Has this ever happened to you? A senior manager asked me if a software application could be ported from Microsoft Access to Salesforce.com. The business unit workflows are partly automated and partly manual today. I did a top-level analysis and suggested that the current application could be ported fairly quickly. The project got approved and […]

Guest Post: 5 Tips for Making the Dreaded All-Day Meeting Productive and Fun

Major projects often require half-day or all-day meetings to get everyone in sync and plan the high-level steps needed to finish the effort. You could find yourself leading such a meeting and you might dread it. It doesn’t have to be painful. You might actually enjoy it. The grunts and sighs usually begin early in […]

Being Agile Means Changing Corporate DNA

Successful companies have well-defined business models. They know what works and what doesn’t. They have a formula for generating revenue and controlling expenses. It’s all good — until the business model needs to change. Why would a successful company want to change its business model? New product competition, disruptive technologies, lower-cost competitors, marketplace demands, rising […]

Just-In-Time Planning Reduces Waste and Improves Software

The best agile software development teams use just-in-time planning. It’s a simple concept, really, but not so simple to put into practice. It has roots in product manufacturing and is embraced by companies following a kanban approach to building products. You’ve probably heard of just-in-time inventory management. It’s a set of techniques for minimizing both raw materials […]

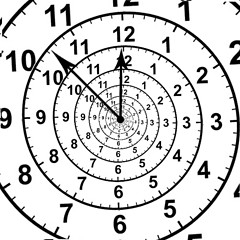

Flight Times and Project Timelines Have Something in Common

Have you ever noticed how predicting the travel time of an international flight covering 4,000 miles is often more accurate than predicting the time to commute to your office? The same is true of software development projects: it can be easier to predict longer-term schedules. Here’s why. We’ll ignore catastrophic situations. Some flights […]

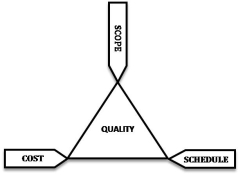

You Can Have It Good, Fast or Cheap. Pick Three!

Most software developers have heard the line “You can have it good, fast or cheap. Pick two.” This idea relates back to the iron triangle of software development. The usual idea is that quality is fixed while time, money and/or scope can change. In reality, all four elements are changeable. We simply don’t like to […]

More Frequent Software Deployments Accelerate the Feedback Cycle

If your software development team practices Scrum, are you required to deploy software only once during a sprint? That practice is clearly defined by Scrum, but is it a requirement? If you want to deploy daily, do your sprints have to be one day long? I don’t believe so. Software teams using Scrum may deploy software […]

Happy Agile People Build Good Agile Teams

2013 is winding down and businesses around the globe are making plans (or have made them) for 2014. Most of those plans will be poorly defined and lacking in clear objectives. Rather than focus your energy on things beyond your control, focus on you. Would you like your software development team and your company to […]

What Does It Take To Be Agile?

What does it take for your software development team to be agile? Only you and your team can answer that question. I can offer my opinion about being agile but your circumstances may vary. The characteristics that one person requires for agility will be different from what someone else in a different situation requires. For […]

Guest Post: BYOD: How Employees and Employers Benefit

The BYOD movement is growing among businesses in the U.S. and globally. The ability of employees to bring their personal devices to work has many benefits that employers (and employees) new to the movement need to recognize and weigh before implementing BYOD in their organizations. Some of the key benefits common to bring-your-own-device programs are […]

Phased Agile Development Builds Bridges

My last five posts have covered the topic of using phases in agile software development similar to the phases of the Rational Unified Process (RUP). So why phases? Neither the Agile Manifesto nor Scrum makes any reference to phased development. If you use phases, are you really doing agile development? (If you haven’t read the […]

Transition: More Ideas for Using RUP Phases in Agile Software Development

This is the fifth in a series of posts about using RUP phases in agile software development. (Here is a link to the last post in case you missed it.) The Transition Phase is next. If your agile software project makes it through my interpretation of using the Inception, Elaboration and Construction phases, you’ll likely […]

Construction: Ideas for Using RUP Phases in Agile Software Development

This is the fourth in a series of posts about using RUP phases in agile software development. (Here is a link to the last post in case you missed it.) Let’s continue. So you’ve completed the Inception and Elaboration phases. That means your team and the business stakeholders have a good, solid understanding of what […]

Elaboration: An Approach to Using Phases in Agile Software Development

This is the third in a series of posts discussing how project phases as defined in RUP (the Rational Unified Process) can be used in agile software development. My last post covered the Inception Phase. Now it’s time to think about the Elaboration Phase. If your project made it through the Inception Phase without getting redirected […]

Inception: An Approach to Using Phases in Agile Software Development

Building software applications using agile techniques is no guarantee of success. Why? While waterfall teams spend too much time at the outset trying to nail down business requirements, agile teams may also invest too much time on story backlogs. Either way, the teams try to capture and document what the business thinks it wants, but […]

Project Phases Can Be Used In Agile Development Too

Big companies like highly structured approaches to software development. Why? They’re trying to control and reduce risk. Despite the fact that their real-world experiences clearly demonstrate a lack of success, they keep trying. Some of these companies are adopting approaches that apply more rigor and structure to agile development. The two most discussed (and controversial) approaches are […]

You Can’t Reuse or Recycle Wasted Time

At times, seemingly inexplicable situations are simple to understand once you wrap your head around them. For example, I’m often astonished at how long simple software changes take from the time the change is proposed by the business to the time it’s deployed. Here’s a scenario I see a lot at company after company. Hard […]

Don’t Let Your Team Become Complacent and Predictable

Many managers tend to assemble software development teams with the goal of keeping the team together — release after release, project after project. The logic is that the team’s performance will improve as the team members get to know and understand each other. But is this the best approach to managing teams? It’s true that […]

Do You Know What’s Wrong With Your Software Development Process?

Be honest. No software development process is flawless — not one. Every process, regardless of approach, has something wrong with it. The reason is simple. Different approaches, and various implementations of those approaches, always focus on a few areas deemed critical to success. Those critical areas vary by company and development group. The narrow focus […]

Every Failed Project Offers Lessons Learned – Healthcare.gov

The Healthcare.gov fiasco has received more than its fair share of attention and criticism. By now, everyone knows that the rollout failed — catastrophically. It gives everyone involved in any aspect of software development a bad rap. For that reason, we should draw a few lessons from this debacle and try to avoid getting ourselves […]